Innovate to Mitigate greenhouse gases: Gaining skill in science practices through a problem-based learning challenge

Link to JSE March 2026 General Issue Table of Contents

Puttick et al JSE March 2026 General Issue PDF

Abstract: The Innovate to Mitigate project has adapted problem-based learning (PBL) for secondary-school students by posing open-ended design challenges and by including a crowdsourcing element to support systematic improvement of student designs. Students were charged with designing feasible, innovative strategies to mitigate CO2 emissions. This paper reports on student learning of science practices as defined by the Next Generation Science Standards. The study draws on data from 15 teams of 8th-12th grade students who participated in the 2024 iteration of the Innovate to Mitigate competition. The competition was implemented in a range of science classrooms that included introductory environmental science, AP environmental science, general science, and physics. Mixed methods analysis reveals that the Innovate to Mitigate PBL learning environment resulted in significant gains in student learning of the practices. Implications for the successful implementation of PBL in a wide range of contexts include the need for iterative design, collaboration, critique, and public communication. These features supported students in designing and evaluating investigations, constructing evidence-based arguments, and engaging in productive discourse, all essential skills for scientific literacy.

Keywords: Problem-based learning, science practices, climate change, collaborative competition

Introduction

While there is much literature about how problem-based learning environments support student engagement, persistence and learning (e.g., Strobel & Barneveld, 2009; Uluçinar, 2023), there is a dearth of studies about how students learn science practices in such environments, particularly when the design is open-ended and there are no specified learning goals (Puttick et al., 2022). Therefore, the primary goal of this study was to examine how the active participation of students aged 13-18 in the Innovate to Mitigate project supported gains in their knowledge of the practices of science as they developed methods for mitigating global climate change. In this article, we describe the results from one Innovate to Mitigate challenge that ran from October 2023 to April 2024.

Complex systems and climate change are key components of the Next Generation Science Standards (NGSS Lead States, 2013). Calls from scientists to address education and action in this arena are more urgent than ever (e.g., IPCC, 2023), and efforts are increasing to design effective learning environments that respond to such calls. Two meta-analyses of the effect of problem-based learning (PBL) on academic achievement (Strobel & Barneveld, 2009; Uluçinar, 2023) found that PBL that is student-centered, collaborative, and supportive of student agency is superior to standard instruction in long-term retention of student learning, as well as in skill development (Savery, 2006; Hung et al., 2019; Wirkala & Kuhn, 2011). Importantly, Uluçinar (2023) points out that PBL is an epistemic model that is well suited for addressing socio-scientific issues such as climate change. In his analysis, he characterizes students’ “thinking processes” about socio-scientific issues as involving scientific problem-solving, which supports both knowledge gains and the acquisition of scientific practices.

The Innovate to Mitigate challenges recruited teachers to engage their students in authentic science practices as part of a global effort to reduce emissions. The challenges engaged students in working independently in teams in an open-ended, goal-oriented way, while the parameters of the challenge and a suite of tools and resources structured the problem space (Puttick et al., 2022). The designed social structure supported students in taking agency for their own learning as they engaged in real science with peers and with scientists (Puttick & Drayton, 2017; Drayton & Puttick, 2018; Puttick et al., 2022; Drayton et al., 2022; Puttick et al., 2023). In online sessions, students reciprocally critiqued and discussed project ideas, which inspired improvements and sometimes new directions. The online sessions drew on crowdsourcing to elicit the best thinking of participant teams, as many real-world crowdsourcing efforts do (Puttick et al., 2024). Thus, the online sessions were framed for participants in terms of “collaborative competition.”

Authentic science learning involves developing fluency in both content and science practices. In an exploratory project funded by the National Science Foundation (grant # 1316225), we noted significant gains in students’ knowledge about climate change science and the science relevant to their own innovation (Puttick & Drayton, 2017) but we did not focus on the “formal” science practices as defined by the NGSS. This article aims to address that gap, as we answer the research question: How does participating in an Innovate to Mitigate challenge support students’ gains in the practices of science?

Background literature

Addressing climate change

Students need to understand the challenges that widespread impacts from climate change pose, and how communities across the United States are addressing both mitigation and adaptation. This understanding is crucial to better preparing them for future climate changes, and for job opportunities in the fast-growing areas of climate mitigation, climate adaptation, green energy generation and energy conservation (e.g., Henbest, 2018; Marcacci, 2015).

Mitigation is a high priority on the research agendas of many entities, for example, the National Academy of Engineering, which lists the development of carbon-sequestration methods as a Grand Challenge for Engineering. Enormous and inspiring advances in R&D in green technologies towards a carbon-neutral world are being made (e.g., Harvard, 2018; Pouraltafi-Kheljan et al., 2018; Akhurst, 2016). Exploration of these advances presents an opportunity for students to engage in authentic science investigations by taking an active role in addressing climate change, the largest collective action problem society currently faces (Coglianese, 2020).

While most middle school and secondary teachers recognize the importance of the NGSS, it can be difficult to implement the necessary “3D” learning (which includes the three strands in the NGSS: disciplinary core ideas, crosscutting concepts, and science practices) to engage students in addressing real-world problems (Snow & Dibner, 2016). Besides cognitive benefits (e.g., Cirkony, 2023), engagement in authentic scientific research also has the potential to increase science self-efficacy, the conviction that one can successfully engage in science (Chen & Usher, 2013). In addition, evidence is accumulating that acting to mitigate the impacts of climate change supports student agency, helps combat climate anxiety, and increases feelings of self-efficacy and hope (e.g., Mortreaux et al., 2023).

Problem-Based Learning

Several authors have described problem-based learning (PBL) as a context in which students tackle real-world problems, learning is student-centered, knowledge-building is collaborative, and emphasis is placed on using resources to formulate ideas and develop reasoning skills (e.g., Orey, 2012; Gorghiu et al., 2015; Uluçinar, 2023; Yew & Goh, 2016). Accounts of how learning takes place in PBL contexts have included studies of the relationships between the learning-oriented activities of students and their learning outcomes (e.g., Puttick & Drayton, 2017; Yew & Schmidt, 2009) and studies of student learning from the perspective of activity theory (e.g., Drayton & Puttick, 2018).

In a meta-analysis of the effect of PBL on academic achievement, Uluçinar (2023) reports evidence that it is superior to standard instruction in long-term retention of student learning, as well as in skill development (Savery, 2006; Hung et al., 2019; Wirkala & Kuhn, 2011). These findings essentially echo those of an earlier meta-analysis conducted by Strobel & Barneveld (2009).

Uluçinar (2023) points out that PBL is an epistemic model that is suited for addressing socio-scientific issues by making it easier to relate science to everyday life and society. He characterizes students’ “thinking processes” as “activated by incorporating scientific problem-solving steps to solve an unsolved problem in a scenario within a research community. This process allows students to have conceptual knowledge about the problem and the necessary scientific problem-solving skills to acquire conceptual knowledge” (p. 80). Ideally, the teacher acts as facilitator or tutor, asking/modeling meta-cognitive questions to guide students (Strobel & Barneveld, 2009). In addition, opportunities for sustained engagement with a phenomenon have been shown to result in deep learning (Barron & Darling-Hammond, 2009; Darling-Hammond et al., 2015).

Essential principles of PBL have emerged from research findings reported in several meta-analyses (e.g., Savery, 2006; Smith et al., 2022; Strobel & Barneveld, 2009; Uluçinar, 2023). With respect to the problem space, these include: (a) problems are embedded in rich, real-world contexts, and thus are of necessity interdisciplinary, (b) problems are ill-structured and allow for free inquiry, and (c) assessments, including self-assessment, measure student progress towards explicit goals of PBL. With respect to student participation, these include: (a) students are responsible for their own learning, that is, their work is based on intrinsic motivation, (b) collaboration is essential, (c) students iteratively apply active and strategic metacognitive reasoning, and (d) students conduct a closing analysis and discussion of their learning.

The design of the Innovate to Mitigate challenges was guided by these principles (Puttick & Drayton, 2017; Drayton & Puttick, 2018). Students’ problem selection and solution were informed by a high level of authenticity in addressing climate change; student activities were aligned to real-world scientific practice or “science-as-practice” (Stroupe, 2014). Student self-assessment was conducted by critique and discussion within student groups, by peers in their own classrooms, and online with peers in classrooms at distant schools.

In the Innovate to Mitigate challenges, the problem space was at an extreme of open-endedness within PBL in that it was very broadly defined (Puttick et al., 2023). As a result, articulated content learning goals could not drive the process. Instead, we defined learning goals as the acquisition of skill in using the science practices (NGSS Lead States, 2013) to design, test and communicate about a mitigation prototype.

Science practices

A Framework for K–12 Science Education argues that the principal aim of science is to create and critique evidence-based causal accounts of natural phenomena (National Research Council, 2012). Further, the Framework suggests that science progresses through discourse within the community of scientists and thus emphasizes that students should learn to communicate and argue about information and findings “clearly and persuasively” (National Research Council, 2012, p. 53). To support this, the Next Generation Science Standards (NGSS) have outlined “science practices” as a guide for reproducing authentic science learning in the classroom (NGSS Lead States, 2013). The vision describes a pedagogical approach in which students learn by actively using the practices to investigate phenomena, interrogate and analyze data, and reason scientifically to generate explanations supported by data.

Osborne (2014) asks how engaging in science practices improves science education. He writes, “Engaging in practice only has value if: (a) it helps students to develop a deeper and broader understanding of what we know, how we know and the epistemic and procedural constructs that guide the practice of science; (b) if it is a more effective means of developing such knowledge; and (c) it presents a more authentic picture of the endeavor that is science” (p. 183). Ford (2008) noted that students’ authentic construction of knowledge is dependent upon “critique,” given the centrality of critique to how scientists construct knowledge and establish confidence in scientific claims.

PBL pedagogy incorporates NGSS-aligned 3D learning by definition. Ample evidence has established that certain practices, e.g., modeling or argumentation, have contributed to the improvement of conceptual learning (e.g., Ford & Wargo, 2011; Schwarz et al., 2009; Stroupe, 2014; Hubber & Tytler, 2017) and supported higher student engagement (Ke & Schwarz, 2019). Our prior work has shown that students engaged in Innovate to Mitigate challenges made significant gains in knowledge about climate science and the science relevant to their own projects (Puttick & Drayton, 2017; Drayton & Puttick, 2018). We have also seen that students have experienced additional dimensions of science that have long been understood in the philosophy of science as essential elements of successful inquiry: the productive value of failure and the use of rhetoric (Puttick et al., 2024).

The Intervention

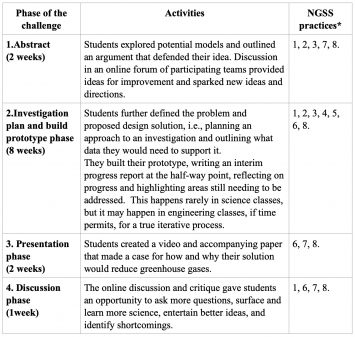

The 2023-2024 challenge was conducted in four phases over a period of thirteen weeks: an Abstract phase, an Investigation Planning and Prototype Building phase, a Presentation phase, and a final Discussion phase (Table 1). The phases align with the science practices as defined by the NGSS.

Table 1. Alignment of the NGSS science practices with phases of the Innovate to Mitigate challenge.

*Practices are numbered according to the NGSS description: 1. Asking questions (for science) and defining problems (for engineering), 2. Developing and using models, 3. Planning and carrying out investigations, 4. Analyzing and interpreting data, 5. Using mathematics and computational thinking, 6. Constructing explanations (for science) and designing solutions (for engineering), 7. Engaging in argument from evidence, and 8. Obtaining, evaluating, and communicating information.

As teams worked on the challenge, they were supported by their teacher with keeping on track, problem-solving, and logistical issues (Puttick et al., 2023). Materials provided for teacher and student use in each phase included rubrics, templates for notetaking and tracking progress, guidelines for producing final videos, and tips for engaging in constructive discourse online. The project website (https://www.terc.edu/innovatetomitigate/) features breaking stories (from news outlets, links to YouTube videos, and reports in popular science blogs) about exciting mitigation research projects, selected to inspire creativity and seed ideas.

Students’ videos and papers were posted to an online video forum where each was judged by a panel of scientists. Teams were awarded prizes for innovation, best video and paper presentation, and most engaged commenter in the two community comment periods.

Teacher professional learning to support Innovate to Mitigate PBL

We expected that teachers would need assistance in supporting PBL (Miller & Krajcik, 2019; Schwarz et al., 2017; Tucker-Raymond et al., 2020; Tytler et al., 2022) and in helping students to understand science as an “evidence based, model and theory building enterprise” (National Research Council, 2012). Teachers were familiarized with the challenge design in three webinars held at transition points during the challenge. Webinar 1 provided orientation to key components of the intended PBL model for student participation and the teachers’ stance as facilitator of student work (Puttick et al., 2024). Teachers discussed how to support students in relying on team members as resources, effectively supporting distributed expertise. They explored strategies, e.g., “productive talk” (Michaels & O’Connor, 2021), for orienting students to the purpose of participant crowdsourced conversations to improve designs (Puttick et al., 2022; Drayton et al., 2022). Webinar 2 focused on the role of guide or coach to support distributed expertise (Puttick et al., 2023; Cassidy et al., 2020; Tucker-Raymond et al., 2021) and on helping students select a project that best fit their capabilities and resources. Critical to their role in supporting student engagement was asking relevant questions (Boon et al., 2022), for example, “How will [your prototype] work? How will you test it? What data will you need to support your argument? What are the disadvantages of your approach?” Webinar 3 focused on supporting student epistemic agency using “science-as-practice” (Stroupe, 2014). This involved a review of the NGSS practices and how they mapped to the stages of the challenge.

Methods

Data for this study come from the fifth iteration of the Innovate to Mitigate challenge in 2023-2024. The overall goal of the project was to create an alternative learning pathway in the ecosystem of school science that provided students the opportunity to experience and practice science as it is practiced and experienced in the real world. The goal of the present study was to determine how the Innovate to Mitigate challenges supported student learning. The research question is:

- How does participating in an Innovate to Mitigate challenge support students’ gains in the practices of science?

The authors designed the challenges and research instruments, provided scientific input in student discussion forums and served as judges, and conducted data analysis for this paper. The first author drafted the paper, with input from the other authors. Approval for our research methodology, data gathering procedures and publication of student data was granted by TERC’s Institutional Review Board.

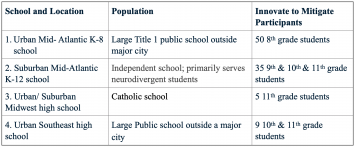

Participants

In Year 5 of the challenge, 99 students participated from 4 schools across the US and a range of classrooms including environmental science, physics, and general science (Table 2). Fifteen teams submitted videos and accompanying science papers.

Data sources

a. Pre/post survey

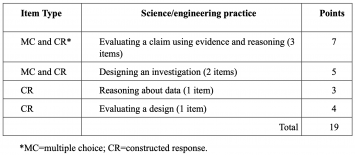

The pre/post assessment consisted of seven items, a combination of multiple-choice and constructed-response, measuring science practices (Table 3 and Appendix 1). Five items were adapted from previously validated instruments: two from McNeill & Krajcik (2008), one from Zhou et al. (2015) and two from Mutch-Jones et al. (2012). The remaining two were designed by the project, both drawn from existing scientific data or from current mitigation efforts in the field. The assessment was given to all students participating in Year 5. The pre-assessment was administered one week before the start of the challenge, and the post-assessment once the challenge was completed. Students completed the assessment using pen and paper during their regular class time.

b. Submissions

Student submissions consisted of a 2-minute video and a 1200-word paper in which they were asked to make a case for how and why their solution would reduce greenhouse gases.

c. Student interviews

A subset of 3 student teams was interviewed after the project; team availability was challenging because of end-of-year activities. The 30-45-minute interview included questions focused on student experience of the project, student learning, and teamwork.

Data Analysis

a. Assessment

A subset of assessments was coded by two researchers until a stable coding scheme for the practices was established. Thereafter, scoring of the assessments was completed by one researcher, with a second researcher coding a subset of 10% of the assessments. Disagreements comprised fewer than 5% of coding instances.
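The double-coding check described above can be summarized as simple percent agreement between two coders. The following is a minimal sketch, assuming each coder's decisions are stored as parallel lists; the function name and the example codes are hypothetical and are not the study's data.

```python
def percent_agreement(coder_a, coder_b):
    """Share of coding instances on which two coders assigned the same code."""
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("coders must rate the same non-empty set of instances")
    matches = sum(a == b for a, b in zip(coder_a, coder_b))
    return matches / len(coder_a)


# Hypothetical codes for ten coding instances (illustration only).
first_coder = [2, 1, 0, 2, 2, 1, 0, 1, 2, 2]
second_coder = [2, 1, 0, 2, 1, 1, 0, 1, 2, 2]
print(f"agreement: {percent_agreement(first_coder, second_coder):.0%}")
```

A disagreement rate is one minus this value, so a rate below 5% corresponds to agreement above 95%.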

Of the 91 student responses received, 53 had complete pre/post responses (58.2%). The completion rate was much higher for high school students (33 complete responses; 76.6% completion rate) than eighth-grade students (20 complete responses; 41.7% completion rate).

Cronbach’s alpha was calculated to measure the internal consistency of the assessment. Based on the pre-test scores, Cronbach’s alpha for the entire set of 7 items was acceptable (α = 0.77). The reliability did not increase if any of the items were dropped. This suggests that these items all co-vary and that the assessment is internally consistent. Cronbach’s alpha was also calculated for the two constructs with more than one item: argumentation (3 items) and investigation design (2 items). For the three argumentation items, Cronbach’s alpha was 0.59, and for the two design items, it was 0.20. This confirmed that there were not enough items in either construct to create a reliable measure (Litwin, 1995), although all seven items hung together fairly well. For this reason, the data were analyzed as a whole.
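For readers unfamiliar with the statistic, the reliability check above can be sketched as follows. This is an illustrative implementation of the standard Cronbach's alpha formula, together with an "alpha if item dropped" helper. The function names and the score matrix are hypothetical, and the source does not say which software the authors used.

```python
from statistics import variance


def cronbach_alpha(rows):
    """Cronbach's alpha for rows of item scores (one row per student)."""
    k = len(rows[0])
    if k < 2:
        raise ValueError("alpha needs at least two items")
    item_variances = [variance(col) for col in zip(*rows)]
    total_variance = variance([sum(row) for row in rows])
    return (k / (k - 1)) * (1 - sum(item_variances) / total_variance)


def alpha_if_item_dropped(rows):
    """Alpha recomputed with each item left out in turn."""
    k = len(rows[0])
    return [
        cronbach_alpha([[v for j, v in enumerate(row) if j != drop] for row in rows])
        for drop in range(k)
    ]


# Hypothetical scores: 6 students x 4 items (illustration only).
scores = [
    [2, 1, 2, 1],
    [3, 2, 3, 2],
    [1, 0, 1, 1],
    [3, 3, 2, 3],
    [0, 1, 0, 0],
    [2, 2, 3, 2],
]
print(round(cronbach_alpha(scores), 2))
print([round(a, 2) for a in alpha_if_item_dropped(scores)])
```

If no value in the second list exceeds the overall alpha, dropping an item does not improve reliability, which is the pattern the authors report for their seven items.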

b. Submissions

We defined codes for the science practices in relation to the rubric that we provided to guide student work (see Appendix 2 for coding scheme). The rubric included guidance on designing an investigation, making a prediction about possible mitigation impact, constructing an evidence-based argument, and what counted as evidence. Students were also asked to describe the limitations of their design and to provide citations for any secondary sources they used. Finally, we also coded the overall soundness of the science (based on current scientific understandings) that supported their innovation.

Analysis was conducted in three phases. In the first, four researchers coded a subset of submissions individually, then met to discuss all codes and come to agreement on a consensus code sheet. In the second phase, the corpus was divided and two pairs of researchers each coded half of the submissions. In the third, where pairs disagreed, the four researchers met to discuss the disagreements and agree on how to resolve them. We believe, like Smagorinsky (2008), that the collaborative approach is generative and more likely to produce an insightful reading of the data “because each decision is the result of a serious and thoughtful exchange” (p. 402).

Results

a. Assessment

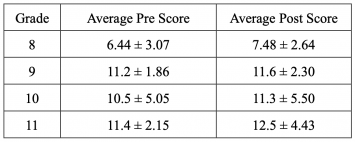

Altogether, there were positive gains in students’ science practices, as measured by the Innovate to Mitigate assessment, after participating in the intervention. High school students showed higher gains (change from pre to post in their overall score) than middle school students, although variation among gains was high. The biggest predictor of gains was students’ prescores: students with lower prescores tended to have higher gains.

On average, there was an increase in the score from pre (9.13±4.13) to post (9.83±4.34). For paired data, this was statistically significant according to a paired Student’s t-test with the one-sided alternative hypothesis that the postscores would be greater (t = 3.1872, df = 52, p = 0.001, lower bound of the one-sided 95% CI = 0.43, mean difference = 0.90). This suggests that the scores on the assessment did increase post-intervention.
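The paired comparison above can be sketched in a few lines. The code below is a minimal illustration and not the authors' analysis script. The raw scores are made up, and the critical value 1.675 (one-sided 95%, df = 52) is an assumed tabulated value. The final lines use only the reported t and mean difference to show how the reported bound of 0.43 follows from them.

```python
from math import sqrt
from statistics import mean, stdev


def paired_t(pre, post):
    """Paired t statistic for post minus pre; returns (t, df, mean_diff, se)."""
    diffs = [b - a for a, b in zip(pre, post)]
    n = len(diffs)
    mean_diff = mean(diffs)
    se = stdev(diffs) / sqrt(n)  # standard error of the mean difference
    return mean_diff / se, n - 1, mean_diff, se


def one_sided_lower_bound(mean_diff, se, t_crit):
    """Lower confidence bound for a 'post greater than pre' alternative."""
    return mean_diff - t_crit * se


# Hypothetical paired scores (illustration only, not the study's data).
t, df, d, se = paired_t([1, 2, 3, 4], [2, 4, 4, 6])
print(f"t = {t:.3f}, df = {df}, mean difference = {d:.2f}")

# Consistency check using only the reported summary values.
reported_t, reported_diff = 3.1872, 0.90
reported_se = reported_diff / reported_t
T_CRIT_DF52 = 1.675  # assumed tabulated one-sided 95% critical value, df = 52
lower = one_sided_lower_bound(reported_diff, reported_se, T_CRIT_DF52)
print(round(lower, 2))
```

Because the alternative hypothesis is one-sided, the interval has only a lower bound; a bound above zero is what supports the claim that scores increased.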

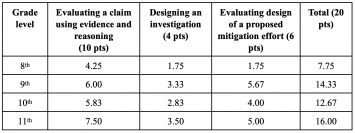

The average scores differed widely between 8th grade and 9th-12th grade (Table 4). The 8th graders had much lower pre and post scores than the high schoolers. Based on a Welch two-sample t-test, this difference was statistically significant for both prescore (t = 5.13, df = 59, p << 0.001) and postscore (t = 4.69, df = 59, p << 0.001), with high schoolers scoring 4-5 points higher on average on both the pre- and post-assessment.

However, a comparison of the average gains for middle vs. high schoolers showed no statistically significant difference per a Welch two-sample t-test (t = 0.817, df = 42, p = 0.42). Taken with the findings above, this suggests that gains in assessment scores after the intervention did not differ detectably between the two grade bands, even though the groups started from very different pre-scores.
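The Welch test used for these group comparisons does not assume equal variances in the two groups, and its degrees of freedom come from the Welch-Satterthwaite approximation. A minimal sketch follows; the function name and the two small samples are hypothetical, chosen only to make the arithmetic easy to follow.

```python
from math import sqrt
from statistics import mean, variance


def welch_t(group_a, group_b):
    """Welch's t statistic and Welch-Satterthwaite degrees of freedom."""
    na, nb = len(group_a), len(group_b)
    va = variance(group_a) / na  # squared standard error, group A
    vb = variance(group_b) / nb  # squared standard error, group B
    t = (mean(group_a) - mean(group_b)) / sqrt(va + vb)
    df = (va + vb) ** 2 / (va ** 2 / (na - 1) + vb ** 2 / (nb - 1))
    return t, df


# Hypothetical gain scores for two grade bands (illustration only).
middle_school = [1, 2, 3, 4, 5]
high_school = [2, 4, 6, 8, 10]
t_stat, df_welch = welch_t(middle_school, high_school)
print(f"t = {t_stat:.3f}, df = {df_welch:.2f}")
```

The approximate degrees of freedom are generally not whole numbers, so the integer values in the text have presumably been rounded.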

A simple linear regression was run to test whether gains varied based on prescore, i.e., whether students who had a lower prescore had a higher gain than their counterparts who started with a fairly high score. The linear regression showed that gain was significantly predicted by prescore (R² = 0.10, F(1, 51) = 6.91, p = 0.01), such that students with lower prescores had a higher gain on the post assessment. This relationship was not dependent on grade level.
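The regression of gain on prescore can be illustrated with ordinary least squares on one predictor. The sketch below uses made-up data and hypothetical function names. The last lines apply the standard identities linking F, R², and adjusted R² to the reported F(1, 51) = 6.91. The reported 0.10 matches the adjusted value under these formulas, but the source does not say which variant was reported, so treat that reading as an assumption.

```python
from statistics import mean


def simple_ols(x, y):
    """Least-squares fit of y on x; returns (slope, intercept, r2, f_stat)."""
    n = len(x)
    mx, my = mean(x), mean(y)
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = my - slope * mx
    ss_tot = sum((yi - my) ** 2 for yi in y)
    ss_res = sum((yi - (intercept + slope * xi)) ** 2 for xi, yi in zip(x, y))
    r2 = 1 - ss_res / ss_tot
    f_stat = r2 / (1 - r2) * (n - 2)  # F with (1, n - 2) degrees of freedom
    return slope, intercept, r2, f_stat


def r2_from_f(f_stat, df_resid):
    """R-squared implied by an F statistic with one predictor."""
    return f_stat / (f_stat + df_resid)


def adjusted_r2(r2, n, predictors=1):
    """Adjusted R-squared for n observations."""
    return 1 - (1 - r2) * (n - 1) / (n - predictors - 1)


# Hypothetical prescores and gains (illustration only).
slope, intercept, r2, f_stat = simple_ols([1, 2, 3, 4], [3, 1, 2, 0])
print(f"slope = {slope:.2f}, R2 = {r2:.2f}, F = {f_stat:.2f}")

# Identities applied to the reported F(1, 51) = 6.91, so n = 53.
implied_r2 = r2_from_f(6.91, 51)
implied_adj = adjusted_r2(implied_r2, 53)
print(round(implied_r2, 2), round(implied_adj, 2))
```

A negative slope in such a fit corresponds to the pattern described in the text: lower prescores go with higher gains.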

b. Submissions

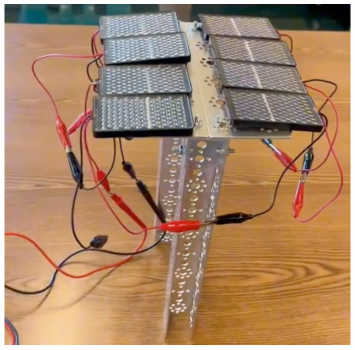

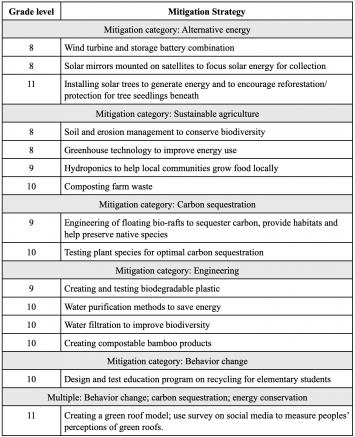

All but one of the submissions fell into a single defined mitigation category; the remaining one addressed multiple categories (Table 5). One category comprised projects focused on improvements to existing alternative energy methods. One project in this category proposed “planting” solar trees to capture solar energy while providing a microclimate beneath them to encourage tree seedling growth (Figure 1).

Four projects targeted sustainable agriculture, while two tested biological methods for carbon sequestration. Four projects included some type of engineering design, e.g., creating biodegradable plastic or compostable bamboo products. The winning entry designed a green roof using Sedum spp. and also conducted a survey via social media to research their peers’ attitudes about green roofs (Cooper & Adamo, 2024).

The range of scores for the submissions followed a similar pattern to the pre/post survey responses; 11th graders scored the highest on some science practices, while 8th graders scored the lowest (Table 6).

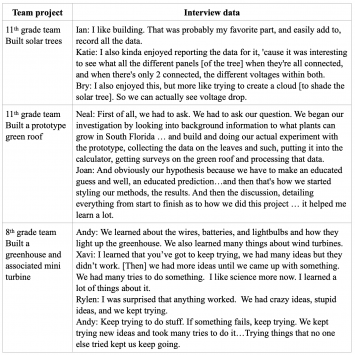

c. Interviews

One 8th and two 11th grade teams were interviewed after their projects were completed. Students in all three teams responded positively about what they had learned from engaging in their Innovate to Mitigate project (Table 7).

Table 7. Excerpts from responses of two 11th grade teams to an interview question, “What did you learn about science practices?” Student names are pseudonyms.

Discussion

Many features of the Innovate to Mitigate competition align with features of classroom learning environments known to be effective and engaging (Puttick & Drayton, 2017; Drayton & Puttick, 2018). For example, researchers have established that involvement in an engineering design process makes authentic practices accessible to learners (Bernstein et al., 2022; Edelson & Reiser, 2006; Wendell et al., 2017), while opportunities for sustained engagement with a phenomenon result in deep learning (Barron & Darling-Hammond, 2009; Darling-Hammond et al., 2015). In addition, researchers have shown that opportunities to reason about and communicate scientific findings support deeper understanding of complex phenomena (e.g., Krajcik & Czerniak, 2018; McNeill & Krajcik, 2008; Stewart et al., 2005). All these features are characteristic of problem-based learning (Ravitz, 2009; Krajcik & Shin, 2014; Miller & Krajcik, 2019; Wirkala & Kuhn, 2011; Yew & Goh, 2016) – and of mature scientific practice. Indeed, the expressed goal of science competitions is to engage students in authentic science.

Research data from prior competitions have shown gains in student learning of science content (Puttick & Drayton, 2017; Drayton & Puttick, 2018). Findings from the present study indicate that middle and high school students participating in the Innovate to Mitigate project also showed significant gains in science practices. The biggest predictor of gains was students’ prescores: students with lower initial scores in science practices tended to have higher gains and so seemed to benefit the most from their participation. We surmise that the ways in which the open-ended PBL approach supported students to have meaningful interactions as they engaged in science practices, while working on projects that had personal, real-life implications for them, likely supported these outcomes.

Student teams crossed disciplinary boundaries as their free choice resulted in projects based in chemistry, engineering, mathematics or biology to address the mitigation challenge. Projects covered a wide diversity of topics, ranging from adapting designs for solar trees to designing a model green roof and conducting surveys on social media to assess attitudes towards green roofs. Ratings from qualitative scoring of student submissions were lowest for the 8th grade students and highest for the 11th graders. We posit that these differences could reflect high schoolers’ greater exposure to, and more opportunities to engage in, science practices.

While we cannot claim a causal connection between project- and teacher-provided support and student outcomes, it is possible that the project’s efforts to promote student inquiry by equipping teachers with evidence-based tools that promote PBL techniques had benefits for students of all ages. The project provided materials for student use, e.g., rubrics, templates for notetaking and tracking progress, guidelines for producing final videos, and tips for engaging in constructive discourse online. In addition, three 1-hour professional learning sessions on Zoom included orientation to key components of the PBL model, the teachers’ stance as facilitator of distributed expertise on student teams (Puttick et al., 2023), and strategies, e.g., “productive talk” (Michaels & O’Connor, 2021), for supporting students to improve their and others’ designs during participant crowdsourced conversations (Puttick et al., 2022). Finally, we reviewed with teachers the NGSS practices and how they mapped to the stages of the challenge.

Overall, we found that the basic design of the challenges was sound. However, the biggest evolution of the challenges over the several years we implemented them was in timing and duration, to accommodate teachers’ calendars and course schedules. We needed to make trade-offs between staging semester-long challenges in the spring semester and having them run over several months so that students could more fully immerse themselves in the conception, building and testing of their prototypes.

The Innovate to Mitigate project brings to light a methodological gap: the apparent lack of established, reliable assessments of science practices, as described by the NGSS. While our assessment showed internal consistency, and items co-varied, the limited number of items per practice construct constrained our ability to draw stronger conclusions about gains in students’ science practices. Future work should focus on the development of validated instruments to assess learning of the science practices in PBL contexts. Such instruments will also be more broadly useful given the wide adoption of “three-dimensional” learning (NGSS Lead States, 2013).

Conclusion

Results from this study contribute to a growing body of research about how students learn science practices in authentic, design-based science learning environments, particularly when the design is open-ended and there are no specified learning goals (Puttick et al., 2022). Emphasizing iterative design, collaboration, critique, and public communication, the Innovate to Mitigate model affirms the essential principles of PBL that have emerged from several meta-analyses (e.g., Savery, 2006; Smith et al., 2022; Strobel & Barneveld, 2009; Uluçinar, 2023): (a) students are responsible for their own learning, that is, their work is based on intrinsic motivation, (b) collaboration is essential, (c) students iteratively apply active and strategic metacognitive reasoning, and (d) students conduct a closing analysis and discussion of their learning.

Limitations

Although students’ assessment gains were significant, the small sample size, especially for high school students, means that the results of this study are merely suggestive. Further work will need to be done to demonstrate the value of this particular kind of learning environment. Furthermore, as already mentioned, the assessment instrument that was used limited our ability to make claims about student learning of individual science practices. Finally, we were unable to gather data on the four different disciplinary contexts in which the project was implemented. Consequently, context variability is likely to be reflected in student outcomes.

Acknowledgments

We wish to thank the teachers and students who participated in this project. Thanks also to Reneé Pawlowski, Sara Lacy and our project advisors for their contributions to the project. This work was funded by the National Science Foundation grant #1908117. Any opinions, findings, and conclusions or recommendations expressed in this material are those of the author(s) and do not necessarily reflect the views of the National Science Foundation.

References

Akhurst, A. (2016). How algae could change the fossil fuel industry. Accessed Nov 1 2016 from http://www.seeker.com/how-algae-could-change-the-fossil-fuel-industry-2022553403.html

Barron, B., & Darling-Hammond, L. (2009). Powerful Learning: Studies Show Deep Understanding Derives from Collaborative Methods. Retrieved from https://www.edutopia.org/inquiry-project-learning-research

Bernstein, D., Puttick, G., Wendell, K., Shaw, F., Danahy, E., & Cassidy, M. (2022). Designing biomimetic robots: iterative development of an integrated technology design curriculum. Educational Technology Research and Development, 70, 119-147. https://doi.org/10.1007/s11423-021-10061-0

Boon, M., Oroszco, M., & Sivakumar, K. (2022). Epistemological and education issues in teaching practice-oriented scientific research: roles for philosophers of science. European Journal for Philosophy of Science, 12, 16. https://doi.org/10.1007/s13194-022-00447-z

Cassidy, M., Tucker-Raymond, E., & Puttick, G. (2020). Distributing expertise to integrate computational thinking practices. Science Scope, 43(7), 18-21.

Chen, J.A. & Usher, E.L. (2013). Profile of the sources of science self-efficacy. Learning and Individual Differences 24, 11-21.

Cirkony, C. (2023). Flexible, creative, constructive, and collaborative: the makings of an authentic science inquiry task. International Journal of Science Education, 45, 1440-1462. https://doi.org/10.1080/09500693.2023.2213384

Coglianese, C. (2020). Climate Change Necessitates Normative Change. The Regulatory Review – Opinion. 460. https://scholarship.law.upenn.edu/regreview-opinion/460

Cooper, O’N., & Adamo, A. (2024). Innovate to Mitigate: The Impact of Social Media on Green Roofs. Hands On! Magazine Fall 2024

Darling-Hammond, L., Barron, B., Pearson, P.D., Schoenfeld, A.H., Stage, E.K., Zimmerman, T.D., Cervetti, G.N., & Tilson, J.L. (2015). Powerful learning: What we know about teaching for understanding. John Wiley & Sons.

Drayton, B., Puttick, G., & Gasca, S. (2022, April 21-26). Microgenesis of Student Design and Rationale in a Crowdsourcing Competition [Conference presentation]. American Educational Research Association. https://doi.org/10.3102/1883133

Drayton, B. & Puttick, G. (2018). Innovate to Mitigate: Learning as activity in a team of high school students addressing a climate mitigation challenge. Sustainability in Environment 3, 1-25.

Edelson, D. C., & Reiser, B. J. (2006). Making authentic practices accessible to learners: Design challenges and strategies. In R. K. Sawyer (Ed.), Cambridge Handbook of the Learning Sciences. (pp. 335-354). New York: Cambridge University Press.

Ford, M. J. (2008). Disciplinary authority and accountability in scientific practice and learning. Science Education, 92(3), 404–423.

Ford, M. J., & Wargo, B. M. (2011). Dialogic framing of scientific content for conceptual and epistemic understanding. Science Education, 96(3), 369–391.

Gorghiu, G., Drǎghicescu, L.M., Cristea, S., Petrescu, A-M., & Gorghiu, L.M. (2015). Problem-based learning – An efficient learning strategy in the science lessons context. Procedia – Social and Behavioral Sciences, 11, 1865-1870.

Harvard University. (2018, November 8). Transforming carbon dioxide into industrial fuels. ScienceDaily. Retrieved November 11, 2018 from www.sciencedaily.com/releases/2018/11/181108130533.htm

Henbest, J. (2018). New Energy Outlook 2018. Bloomberg NEF. Accessed Oct 2018 from https://about.bnef.com/new-energy-outlook/#toc-download

Hubber, P., & Tytler, R. (2017). Enacting a representation construction approach to teaching and learning. In: Treagust, D.F., Duit, R., & Fischer, H.E. Multiple Representations in Physics Education (pp. 139-161). Springer Nature.

Hung, W., Dolman, H.J.M., & van Merriënboer, J.G. (2019). A review to identify key perspectives in PBL meta‑analyses and reviews: trends, gaps and future research directions. Advances in Health Sciences Education, 24, 943-957.

IPCC – Intergovernmental Panel on Climate Change (2023). The Synthesis Report of the Intergovernmental Panel on Climate Change. Sixth Assessment Report. New York NY: United Nations.

Ke, L., & Schwarz, C.V. (2019). Using Epistemic Considerations in Teaching: Fostering Students’ Meaningful Engagement in Scientific Modeling. In: Upmeier zu Belzen, A., Krüger, D., van Driel, J. (eds) Towards a Competence-Based View on Models and Modeling in Science Education. Models and Modeling in Science Education, vol 12 (pp. 181-199). Springer, Cham. https://doi.org/10.1007/978-3-030-30255-9_11

Krajcik, J., & Czerniak, C.M. (2018). Teaching science in elementary and middle schools: A project-based learning approach. 5th Ed. New York: Routledge.

Krajcik, J. & Shin, N. (2014). Project-Based Learning. In Sawyer, K. (Ed.) The Cambridge handbook of the learning sciences, 2nd Ed (pp. 275-297). New York NY: Cambridge University Press.

Litwin, M. S. (1995). Validity. In Validity (pp. 33-46). SAGE Publications, Inc., https://doi.org/10.4135/9781483348957.n3

Marcacci, S. (2015). 1.2 Million US Green Jobs Reported in Q1. Accessed Oct 2018 from https://cleantechnica.com/2015/06/05/1-2-million-us-green-jobs-reported-q1-heres-thats-problem/

McNeill, K., & Krajcik, J. (2008). Assessing middle school students’ content knowledge and reasoning through written scientific explanations. Washington DC: National Science Teachers Association Press.

Michaels, S., & O’Connor, C. (2021) Talk Science primer. Cambridge: TERC. https://inquiryproject.terc.edu/shared/pd/TalkScience_Primer.pdf

Miller, E.C., & Krajcik, J.S. (2019). Promoting Deep Learning Through Project-Based Learning: a Design Problem. Disciplinary and Interdisciplinary Science Education Research 1, 1–10. doi:10.1186/s43031-019-0001-1

Mortreux, C., Barnett, J., Jarillo, S., & Greenaway, K. (2023). Reducing personal climate anxiety is key to adaptation. Nature Climate Change, 13, 590. https://doi.org/10.1038/s41558-023-01716-2

Mutch-Jones, K., Puttick, G., & Minner, D. (2012). Lesson Study for Accessible Science: Building expertise to improve practice in inclusive science classrooms. Journal of Research in Science Teaching, 49(8), 1012-1034.

National Research Council. (2012). A Framework for K-12 Science Education: Practices, Crosscutting Concepts, and Core Ideas. Washington, DC: The National Academies Press. https://doi.org/10.17226/13165.

NGSS Lead States. (2013). Next Generation Science Standards: For states, by states. Washington, DC: The National Academies Press.

Orey, M. (2010). Emerging perspectives on learning, teaching, and technology. Available at https://textbookequity.org/Textbooks Orey_Emergin_Perspectives_Learning.pdf

Osborne, J. (2014). Teaching scientific practices: The challenges of change. Journal of Science Teacher Education, 25, 177-196. https://doi.org/10.1007/s10972-014-9384-1

Pouraltafi-Kheljan, S., Azimi, A., Mohammadi-Ivatloo, B. & Rasouli, M. (2018). Optimal design of wind farm layout using a biogeographical based optimization algorithm. Journal of Cleaner Production 201, 1111-1124.

Puttick, G., Drayton, B., & Gasca, S. (2024). Open Innovation Challenge to Mitigate Global Warming. Connected Science Learning, 5(5). https://doi.org/10.1080/24758779.2023.12318609

Puttick, G., Drayton, B., & Gasca, S. (2023, June 10-15). Innovate to Mitigate: Teacher role in a student competition [Conference presentation]. International Society of the Learning Sciences, 1818-1819. https://repository.isls.org/bitstream/1/10035/1/ICLS2023_1817-1818.pdf

Puttick, G., Drayton, B., & Gasca, S. (2022). Innovate to Mitigate: Analysis of student design and rationale in a crowdsourcing competition to mitigate global warming. In Chinn, C., Tan, E., Chan, C., & Kali, Y. (Eds.), Proceedings of the 16th International Conference of the Learning Sciences-ICLS2022. https://repository.isls.org/bitstream/1/8929/1/ICLS2022_1141-1144.pdf

Puttick, G. & Drayton, B. (2017). Innovate to Mitigate: Science learning in an open-innovation challenge for high school students. Sustainability in Environment, 2, 389-418.

Ravitz, J. (2009). Introduction: Summarizing Findings and Looking Ahead to a New Generation of PBL Research. Interdisciplinary Journal of Problem-based Learning, 3, 4-11. https://doi.org/10.7771/1541-5015.1088

Savery, J.R. (2006). Overview of problem-based learning: Definitions and distinctions. Interdisciplinary Journal of Problem-based Learning, 1(1). https://doi.org/10.7771/1541-5015.1002

Schwarz, C. V., Passmore, C., & Reiser, B.J. (2017). Helping Students Make Sense of the World Using Next Generation Science and Engineering Practices. Arlington VA: NSTA Press.

Schwarz, C.V., Reiser, B.J., Davis, E.A., Kenyon, L., Achér, A., Fortus, D., Shwartz, Y., Hug, B., & Krajcik, J. (2009). Developing a Learning Progression for Scientific Modeling: Making Scientific Modeling Accessible and Meaningful for Learners. Journal of Research in Science Teaching, 46, 632–654.

Smagorinsky, P. (2008). The Method Section as Conceptual Epicenter in Constructing Social Science Research Reports. Written Communication, 25(3), 389–411. https://doi.org/10.1177/0741088308317815

Smith, K., Maynard, N., Berry, A., Stephenson, T., Spiteri, T., Corrigan, D., Mansfield, J., Ellerton, P., & Smith, T. (2022). Principles of Problem-Based Learning (PBL) in STEM Education: Using Expert Wisdom and Research to Frame Educational Practice. Education Sciences, 12(10), 728. https://doi.org/10.3390/educsci12100728

Snow, C.E., & Dibner, K.A. (Eds.). (2016). Science Literacy: Concepts, Contexts, and Consequences. Committee on Science Literacy and Public Perception of Science; Board on Science Education; Division of Behavioral and Social Sciences and Education; National Academies of Sciences, Engineering, and Medicine. Washington, DC: The National Academies Press.

Stewart, J. L., Cartier, J., & Passmore, C. (2005). Developing Understanding Through Model-Based Inquiry. In How Students Learn: History, Mathematics, and Science in the Classroom (pp. 515–565). Washington, DC: The National Academies Press.

Strobel, J. & Barneveld, A. (2009). When is PBL more effective? A meta-synthesis of meta-analyses comparing PBL to conventional classrooms. Interdisciplinary Journal of Problem-Based Learning, 3(1).

Stroupe, D. (2014). Examining classroom science practice communities: How teachers and students negotiate epistemic agency and learn science-as-practice. Science Education, 98(3), 487-516.

Tucker-Raymond, E., Cassidy, M. & Puttick, G. (2021). Science teachers can teach computational thinking through distributed expertise. Computers and Education 173. https://doi.org/10.1016/j.compedu.2021.104284

Tytler, R., Prain, V., Kirk, M., Mulligan, J., Nielsen, C., Speldewinde, C., … & Xu, L. (2022). Characterising a representation construction pedagogy for integrating science and mathematics in the primary school. International Journal of Science and Mathematics Education, 21(1), 1–23.

Uluçinar, U. (2023). The effect of problem-based learning in science education on academic achievement: A meta-analytical study. Science Education International, 34, 72-85.

Wendell, K.B., Wright, C.G., & Paugh, P. (2017). Reflective decision-making in elementary students’ engineering design. Journal of Engineering Education, 106, 356-397.

Wirkala, C., & Kuhn, D. (2011). Problem-based learning in K-12 education: Is it effective and how does it achieve its effects? American Educational Research Journal, 48(5), 1157-1186.

Yew, E.H.J., & Goh, K. (2016). Problem-Based Learning: An Overview of its Process and Impact on Learning. Health Professions Education, 2, 75-79. https://doi.org/10.1016/j.hpe.2016.01.004.

Yew, E.H.J., & Schmidt, H.G. (2009). Evidence for constructive, self-regulatory, and collaborative processes in problem-based learning. Advances in Health Sciences Education, 14, 251-273.

Zhou, S., Han, J., Koenig, K., Raplinger, A., Pi, Y., Li, D., Xiao, H., Fu, Z., & Bao, L. (2016). Assessment of Scientific Reasoning: the Effects of Task Context, Data, and Design on Student Reasoning in Control of Variables. Thinking Skills and Creativity, 19, 175–187. https://doi.org/10.1016/j.tsc.2015.11.004

Gillian Puttick is a senior scientist at TERC, a nonprofit education foundation in Cambridge, MA. An ecologist by training, her research and development work focuses primarily on ecology, environmental science, and climate change education.

Kathryn Hobbs is a former high school biology teacher and has been a researcher at TERC for over 20 years. Her current research is focused on teachers who are designing and integrating interdisciplinary thinking into their middle school classrooms.

Santiago Gasca is a researcher and evaluator at TERC. He collaborates with middle and high school teachers to increase student engagement in STEM. His work focuses on empowering learners through student-centered pedagogies.

Brian Drayton was a senior scientist, now consultant, at TERC. His work has included curriculum development in physical and life sciences, especially ecology; research on inquiry in science teaching and learning; research and development on electronic communities; and climate change education.

Jessica Karch is a Senior Researcher and Evaluator at TERC. She uses qualitative and mixed methods to study science learning and learning environments, with a focus on equity, sociocultural, and asset-based frameworks.